chkno 6 months ago • 100%

chkno 10 months ago • 100%

Say more?

NixOS supports headless LUKS, which was an improvement for me in my last distro-hop. The NixOS wiki even has an example of running a Tor onion service from initrd to accept a LUKS unlock credential.

chkno 11 months ago • 100%

Nice.

Here's another worked example of a less adventurous pi pico (W) project I did recently. It's C, built with Nix, and doesn't require setting up all the hardware-debugger stuff (it uses the much simpler hold-bootsel-while-plugging-in and copy-the-.uf2-file mechanism to load code). The 5th commit is the simple blink example from the SDK with all the build mechanisms figured out.

chkno 11 months ago • 100%

Bumping package versions usually isn't hard. Here, I'll do this one out loud, & maybe you can do it next time you need to:

- Search https://github.com/NixOS/nixpkgs/pulls to see if someone else already has a PR open for a version bump for this package.

- Clone the nixpkgs repo if you haven't already: `git clone https://github.com/NixOS/nixpkgs.git ~/devel/nixpkgs` (or `git pull` if you have).

- Create a branch for this bump: `git checkout -b stremio`

- Find stremio: `find pkgs -name stremio`

- Edit it: `$EDITOR pkgs/applications/video/stremio/default.nix` Looks like nixpkgs has version 4.4.142. If I go to https://www.stremio.com/ (link in `meta.homepage` in this file) and click 'Download', it all says 4.4, which is not helpful. The 'source code' link goes to github, and the 'tags' link there lists version `v4.4.164`, which is what we're looking for.

- In my editor, I change the version: `4.4.142` → `4.4.164`.

- In my editor, I mess up both the hashes: I just add a block of zeros somewhere in the middle: `sha256-OyuTFmEIC8PH4PDzTMn8ibLUAzJoPA/fTILee0xpgQI=` → `sha256-OyuTFmEIC80000000000000000000A/fTILee0xpgQI=`.

- Leaving my editor, I build the updated package: `nix-build . -A stremio`

- It fails, because the hashes are wrong, obviously. But it tells me what hash it got, which I copy/paste back in, in the spirit of collective TOFU. I do this twice, once for each hash.

- It builds successfully. I test the result: `./result/bin/stremio`. Looks like it works enough to prompt me to log in, at least. I don't know what stremio is or have an account, but it's probably fine.

- I commit my change: `git commit -a -m 'stremio: 4.4.142 -> 4.4.164'`

- I push my commit: `git push github` (If this is your first time, create a fork of nixpkgs in the github web UI & `git remote add` a remote for it first)

- I create a PR in the github web UI: https://github.com/NixOS/nixpkgs/pull/263387
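
The "mess up the hash" step can be sketched in Python, if you'd rather not do it by hand. This is only an illustration: `scramble_sri_hash` is a made-up helper name, not a nixpkgs tool, and all it does is overwrite a run of characters in the middle of the hash with zeros while keeping the length the same.

```python
def scramble_sri_hash(sri: str, zeros: int = 20) -> str:
    """Overwrite the middle of an SRI-style hash ('sha256-...') with zeros.

    Hypothetical helper for illustration only. The result has the same
    length as the input but no longer matches the real content hash.
    """
    prefix, sep, digest = sri.partition("-")
    if not sep:
        raise ValueError("expected an SRI-style hash like 'sha256-...'")
    zeros = min(zeros, len(digest))
    start = (len(digest) - zeros) // 2  # centre the block of zeros
    scrambled = digest[:start] + "0" * zeros + digest[start + zeros:]
    return f"{prefix}-{scrambled}"
```

The point is just to end up with a well-formed but wrong hash, so the build fails and reports the hash it actually got.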

Or push-ups with someone sitting on your shoulders? I'm interested in reading about this type of exercise — what forms people have come up with that are safe, effective, and fun. What is it called, so that I can search for it?

chkno 1 year ago • 100%

I use Nix (not yet NixOS) on a Librem 5. Debian stable is so old, so it's nice to have Nix as an alternative.

chkno 1 year ago • 100%

Thanks for the lead!

It looks like the Buddy Read feature does in fact start with a specific book and organize a group around it, but it invites me to specify all the people that will ever be in the group right away, at group creation time. I get three ways to invite people:

- "Machine-learning powered reading buddy recommendations" - Unspecified voodoo. Three users are shown.

- "Community members who have this book on their radar" - Probably folks that have this on their public 'to read' list. Three users are shown.

- Specifying users directly by username

This doesn't quite fit the "I'm up for this, let me know when it starts" mechanic.

I could create a new group & invite all three of the users with this book in their public 'to read' list, but I think folks treat the 'to read' list very, very casually -- not at the "I'm ready to commit to a reading group" level. These three users have 723, 2749, and 3771 books on their 'to read' lists respectively. I see that I somehow have 46 books on mine, & haven't been thinking of it as a 'ready to commit to reading group' list.

I have a specific book I've been meaning to read for awhile. I've heard that while it's a great journey, it's a dense / heavy / slow read along the way. It sounds like it'd be fun to read it together with a group of likewise interested folks. Is there a service for pulling together reading groups around specific books, rather than the more common way of gathering a group of people and then selecting books? I'm imagining a website that has a sign-up page for ~every book and when ~10 people sign up for a book they all get an email introducing them to each other. Like if there was a bus stop for every book & when enough people had gathered, a bus appears & they depart together. Given a list of all the books, this seems like a pretty easy thing to make. Does it exist yet?
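
To make the bus-stop idea concrete, here is a minimal sketch of the core mechanic in Python. Everything here is hypothetical (the `BookBusStops` class, using email addresses as identifiers, the group size of 10); a real service would also need storage, the actual email sending, and some way to leave a stop.

```python
from collections import defaultdict


class BookBusStops:
    """One waiting list ('bus stop') per book; a full stop departs as a group."""

    def __init__(self, group_size=10):
        self.group_size = group_size
        self.waiting = defaultdict(list)  # book title -> emails waiting

    def sign_up(self, book, email):
        """Add a reader to a book's stop.

        Returns the list of departing readers once the stop is full,
        otherwise None. Signing up twice for the same book is a no-op.
        """
        queue = self.waiting[book]
        if email in queue:
            return None
        queue.append(email)
        if len(queue) < self.group_size:
            return None
        group = list(queue)
        del self.waiting[book]  # the bus departs; the stop starts empty again
        return group
```

Whoever calls `sign_up` would send the introduction email whenever it returns a group.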

chkno 1 year ago • 100%

Thank you for sharing updates about your progress. Good luck rummaging around in found.000. :(

chkno 1 year ago • 100%

Regulation is slow, full of drama, scales poorly, & can result in a legal thicket that teams of lawyers can navigate better than the individuals it's intended to advocate for. Decriminalizing interoperability is faster & can handle most of the small/simple cases, freeing up our community/legislative resources to focus on the most important regulatory needs.

chkno 1 year ago • 100%

Yeah, that's normal. That's the seam -- where each layer starts/stops. Yours don't look any worse than mine.

Sometimes you can tweak settings to reduce them a bit, but the only way to avoid them completely is to print in spiral/vase mode (which is very limiting: 1 contiguous perimeter, no infill).

More importantly: You can control where they appear on the part! Your slicer may have settings like 'nearest', 'random', 'aligned', 'rear', or may have a way to paint on the part in the UI where the seams should be. Seams are clearly visible when they're in the middle of an otherwise-smooth expanse like the side of your boat there, but are barely noticeable if you put them on a corner.

chkno 1 year ago • 80%

X11 for xdotool. ydotool doesn't support (& can't really support with its current architecture) retrieving information like the current mouse location, current window, window dimensions & titles. Also, normal (unprivileged) user ydotool use requires udev rules or session scripts and/or running a ydotool daemon & many distros don't yet ship with this Just Working.

X11 for Alt-F2 r to restart Gnome Shell without ending the whole session. This is a useful workaround for a variety of Gnome bugs.

Gmail prompt to provide phone number sounds like a threat

When it's hot during the day and cold at night, I sometimes find myself under-dressed for late evening riding. I can pedal harder to generate body heat, but on flat ground that creates wind chill & doesn't help. Pedaling hard while lightly holding the brakes works really well to warm up! But the downhill-biking folks warn about the hazards of overheating brakes (mostly [disc brakes](https://www.google.com/search?q=downhill+biking+brakes+overheat) but also [rim brakes / V-brakes](https://www.roadbikerider.com/how-can-i-control-descents-without-overheating-rims-d1/)). I have V-brakes. I imagine just pedaling into brakes transfers heat into them much more slowly than controlling downhill descents, since I can go down hills much faster than I can go up hills (it takes much longer to transfer one hill's worth of energy from my muscles into having climbed the hill than to transfer the same one hill's worth of energy into the brakes/rims while descending it). Do I need to worry about this at all?
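
A rough back-of-envelope version of that comparison, as a Python sketch. All the numbers are assumptions picked for illustration (90 kg of rider plus bike, 150 W of sustained pedaling, a 10% grade held at 10 m/s), and the descent figure ignores air drag and rolling resistance, so it overstates what the brakes really absorb. Treating the whole 150 W as going into the brakes is likewise an upper bound.

```python
G = 9.81  # gravitational acceleration, m/s^2


def braking_power_descending(mass_kg, speed_m_s, grade):
    """Power (W) the brakes must absorb to hold a constant speed downhill.

    Uses the small-angle approximation (vertical speed = speed * grade)
    and ignores air drag and rolling resistance.
    """
    vertical_speed = speed_m_s * grade
    return mass_kg * G * vertical_speed


# Assumed, illustrative numbers -- not measurements.
pedaling_watts = 150.0
descending_watts = braking_power_descending(mass_kg=90.0, speed_m_s=10.0, grade=0.10)
ratio = descending_watts / pedaling_watts
```

With these assumed numbers the descent works out to about 880 W, roughly six times the assumed pedaling power, which is at least consistent with the intuition above.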

chkno 1 year ago • 100%

There are so many ways to handle backups, so many tools, etc. You'll find something that works for you.

In the spirit of sharing a neat tool that works well for me, addressing many of the concerns you raised, in case it might work for you too: Maybe check out git annex. Especially if you already know git, and maybe even if you don't yet.

I have one huge git repository that in spirit holds all my stuff. All my storage devices have a check-out of this git repo. So all my storage devices know about all my files, but only contain some of them (files not present show up as dangling symlinks). git annex tracks which drives have which data and enforces policies like "all data must live on at least two drives" and "this more-important data must live on at least three drives" by refusing to delete copies unless it can verify that enough other copies exist elsewhere.

- I can always see everything I'm tracking -- the filenames and directory structure for everything are on every drive.

- I don't have to keep track of where things live. When I want to find something, I can just ask which drives it's on.

- (I also have one machine with a bunch of drives in it which I union-mount together, then NFS mount from other machines, as a way to care even less where individual files live)

- Running `git annex fsck` on a drive will verify that

  - All the content that's supposed to live on that drive is in fact present and has the correct sha256 checksum, and

  - All policies are satisfied -- all files have enough copies.
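
The "refuse to delete unless enough other copies exist" rule is simple enough to sketch in a few lines of Python. This is not git annex's code or API, just the shape of the check; `can_drop` and its arguments are hypothetical names.

```python
def can_drop(file_locations, drive, required_copies):
    """Return True if `drive` may delete its copy of a file.

    file_locations: set of drive names known to hold the file.
    required_copies: how many copies must still exist elsewhere afterwards.
    Illustrative only; the real tool also verifies that those other
    copies are actually reachable and intact before dropping anything.
    """
    others = file_locations - {drive}
    return drive in file_locations and len(others) >= required_copies
```

So with a two-copy policy, a file on three drives can be dropped from one of them, but a file on only two drives can't be dropped from either.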

chkno 1 year ago • 100%

chkno 1 year ago • 100%

I ran Gentoo for ~15 years and then switched to NixOS ~3 years ago. The last straw was Gentoo bug 676264, where I submitted version bump & build fix patches to fix security issues and was ignored for three months.

In Gentoo, glsa-check only tells you about security vulnerabilities after there's a portage update that would resolve it. I.e., for those three months, all Gentoo users had a ghostscript with widely-known vulnerabilities and glsa-check was silent about it. I'm not cherry-picking this example -- this was one of my first attempts to help be proactive about security updates & I found that the process is not fit for purpose. And most fixed vulnerabilities don't even get GLSA advisories -- the advisories have to be created manually. Awhile back, I had made a 'gentle update' script that just updated packages glsa-check complained about. It turns out that's not very useful.

Contrast this with vulnix, a tool in Nix/NixOS which directly fetches the vulnerability database from nvd.nist.gov (with appropriate polite local caching) and directly checks locally installed software against it. You don't need the Nix project to do anything for this to Just Work; it's always comprehensive. I made a NixOS upgrade script that uses vulnix to show me a diff of security issues as it does a channel update. Example output:

    commit ...
    Author: <me>
    Date: Sat Jun 17 2023

    New pins for security fixes

    -9.8 CVE-2023-34152 imagemagick
    -7.8 CVE-2023-34153 imagemagick
    -7.5 CVE-2023-32067 c-ares
    -7.5 CVE-2023-28319 curl
    -7.5 CVE-2023-2650 openssl
    -7.5 CVE-2023-2617 opencv
    -7.5 CVE-2023-0464 openssl
    -6.5 CVE-2023-31147 c-ares
    -6.5 CVE-2023-31124 c-ares
    -6.5 CVE-2023-1972 binutils
    -6.4 CVE-2023-31130 c-ares
    -5.9 CVE-2023-32570 dav1d
    -5.9 CVE-2023-28321 curl
    -5.9 CVE-2023-28320 curl
    -5.9 CVE-2023-1255 openssl
    -5.5 CVE-2023-34151 imagemagick
    -5.5 CVE-2023-32324 cups
    -5.3 CVE-2023-0466 openssl
    -5.3 CVE-2023-0465 openssl
    -3.7 CVE-2023-28322 curl

    diff --git a/channels b/channels
    --- a/channels
    +++ b/channels
    @@ -8,23 +8,23 @@ [nixos]
     git_repo = https://github.com/NixOS/nixpkgs.git
     git_ref = release-23.05
    -git_revision = 3a70dd92993182f8e514700ccf5b1ae9fc8a3b8d
    -release_name = nixos-23.05.419.3a70dd92993
    -tarball_url = https://releases.nixos.org/nixos/23.05/nixos-23.05.419.3a70dd92993/nixexprs.tar.xz
    -tarball_sha256 = 1e3a214cb6b0a221b3fc0f0315bc5fcc981e69fec9cd5d8a9db847c2fae27907
    +git_revision = c7ff1b9b95620ce8728c0d7bd501c458e6da9e04
    +release_name = nixos-23.05.1092.c7ff1b9b956
    +tarball_url = https://releases.nixos.org/nixos/23.05/nixos-23.05.1092.c7ff1b9b956/nixexprs.tar.xz
    +tarball_sha256 = 8b32a316eb08c567aa93b6b0e1622b1cc29504bc068e5b1c3af8a9b81dafcd12
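
The "diff of security issues" part boils down to a set difference between the findings before and after the update. Here's a minimal Python sketch of that idea. It is not my actual upgrade script and not vulnix's API; `security_diff` is a hypothetical name, and it assumes the findings have already been parsed into dicts mapping `(cve_id, package)` to a CVSS score.

```python
def security_diff(before, after):
    """Summarize which known vulnerabilities an update removes or adds.

    before/after: dicts mapping (cve_id, package) -> CVSS score, assumed
    to be already parsed from a vulnerability scan of each system.
    Returns lines like '-9.8 CVE-2023-34152 imagemagick', highest score first.
    """
    lines = []
    for sign, keys, source in (
        ("-", before.keys() - after.keys(), before),  # fixed by the update
        ("+", after.keys() - before.keys(), after),   # newly introduced
    ):
        for key in sorted(keys, key=lambda k: (-source[k], k)):
            cve, package = key
            lines.append(f"{sign}{source[key]:.1f} {cve} {package}")
    return lines
```

Issues present both before and after are left out, so the summary only shows what the update changes.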

chkno 1 year ago • 100%

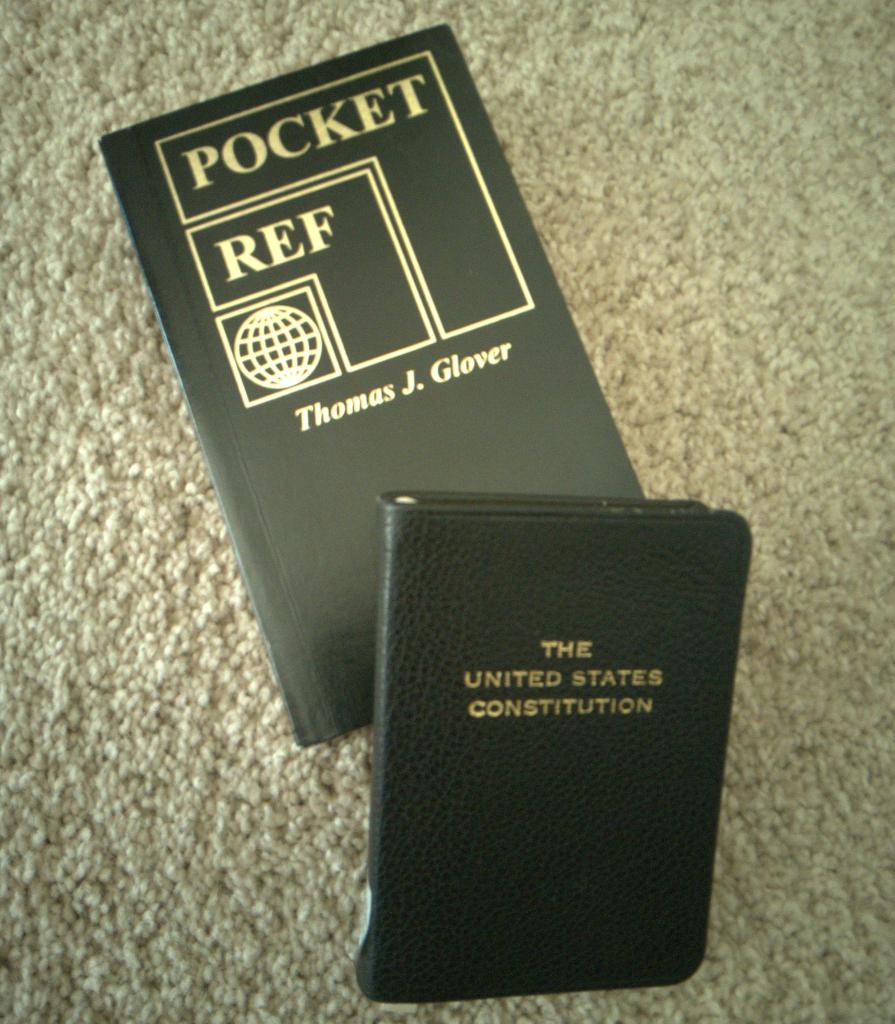

Pocket Ref & pocket US Constitution.

chkno 1 year ago • 100%

The benefit of using something fancier than rsync is that you get a point-in-time recovery capability.

For example, if you switch the enclosures weekly, rsync gives you two recovery options: restore to yesterday's state (from the enclosure not in the safe) and restore to a state from 2-7 days ago (from the one in the safe, depending on when it went into the safe).

Daily incremental backups with a fancy tool like dar let you restore to any previous state. Instead of two options, you have hundreds of options, one for each day. This is useful when you mess up something in the archive (eg: accidentally delete or overwrite it) and don't notice right away: It appeared, was ok for awhile, then it was bad/gone and that bad/gone state was backed up. It's nice to be able to jump back in time to the brief it-was-ok state & pluck the content back out.

If you have other protections against accidental overwrite (like you only back up git repos that already capture the full history, and you git fsck them regularly) — then the fancier tools don't provide much benefit.

I just assumed that you'd want this capability because many folks do and it's fairly easy to get with modern tools, but if rsync is working for you, no need to change.

chkno 1 year ago • 100%

Sounds fine?

Yes: Treat the two enclosures independently and symmetrically, such that you can fully restore from either one (the only difference would be that the one in the safe is slightly stale) and the ongoing upkeep is just:

- Think: "Oh, it's been awhile since I did a swap" (or use a calendar or something)

- Unplug the drive at the computer.

- Carry it to the safe.

- Open the safe.

- Take the drive in the safe out.

- Put the other drive in the safe.

- Close the safe.

- Carry the other drive to the computer.

- Plug it in.

- (Maybe: authenticate for the drive encryption if you use normal full-disk encryption & don't cache the credential)

If I assume a normal incremental backup setup, both enclosures would have a full backup and a pile of incremental backups. For example, if swapped every three days:

    Enclosure A          Enclosure B
    -----------------    -----------------
    a-full-2023-07-01
    a-incr-2023-07-02
    a-incr-2023-07-03
                         b-full-2023-07-04
                         b-incr-2023-07-05
                         b-incr-2023-07-06
    a-incr-2023-07-07
    a-incr-2023-07-08
    a-incr-2023-07-09
                         b-incr-2023-07-10
                         b-incr-2023-07-11
                         b-incr-2023-07-12
    a-incr-2023-07-13
    ....

The thing taking the backups need not even detect or care which enclosure is plugged in -- it just uses the last incremental on that enclosure to determine what's changed & needs to be included in the next incremental.

Nothing need care about the number or identity of enclosures: You could add a third if, for example, you found an offsite location you trust. Or when one of them eventually fails, you'd just start using a new one & everything would Just Work. Or, if you want to discard history (eg: to get back the storage space used by deleted files), you could just wipe one of them & let it automatically make a new full backup.
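
The decision logic the backup script needs is tiny. Here's a Python sketch of just that part, assuming archive names shaped like the ones in the table above (an optional enclosure prefix, then `full` or `incr`, then an ISO date). `plan_next_backup` is a hypothetical name, and actually invoking the backup tool is left out.

```python
import re

# Assumed naming scheme: optional prefix, 'full' or 'incr', ISO date.
ARCHIVE_RE = re.compile(r"^(?:\w+-)?(?P<kind>full|incr)-(?P<day>\d{4}-\d{2}-\d{2})$")


def plan_next_backup(archive_names):
    """Decide what to write to whichever enclosure is plugged in.

    archive_names: names of the archives found on that enclosure.
    Returns ('full', None) for an empty or freshly wiped enclosure,
    otherwise ('incr', <newest archive on this enclosure>) so the next
    incremental is taken relative to it.
    """
    dated = []
    for name in archive_names:
        match = ARCHIVE_RE.match(name)
        if match:
            dated.append((match.group("day"), name))
    if not dated:
        return ("full", None)
    _, latest = max(dated)  # ISO dates sort correctly as strings
    return ("incr", latest)
```

Note that nothing here looks at which enclosure it is: a new or wiped one simply gets a full backup the first time it's plugged in.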

Are you asking for help with software? This could be as simple as dar and a shell script.

My personal preference is to tell the enclosure to not try any fancy RAID stuff & just present all the drives directly to the host, and then let the host do the RAID stuff (with lvm or zfs or whatever), but I understand opinions differ. I like knowing I can always use any other enclosure or just plug the drives in directly if/when the enclosure dies.

I notice you didn't mention encryption, maybe because that's obvious these days? There's an interesting choice here, though: You can do normal full-disk encryption, or you could encrypt the archives individually. Dar actually has an interesting feature here I haven't seen in any other backup tool: If you keep a small --aux file with the metadata needed for determining what will need to go in the next incremental, dar can encrypt the backup archives asymmetrically to a GPG key. This allows you to separate the capability of writing backups and the capability of reading backups. This is neat, but mostly unimportant because the backup is mostly just a copy of what's on the host. It comes into play only when accessing historical files that have been deleted on the host but are still recoverable from point-in-time restore from the incremental archives -- this becomes possible only with the private key, which is not used or needed by any of the backup automation, and so is not kept on the host. (You could also, of course, do both full-disk encryption and per-archive encryption if you want the neat separate-credential for deleted files trick and also don't want to leak metadata about when backups happen and how large the incremental archives are / how much changed.) (If you don't full-disk-encrypt the enclosure & rely only on the per-archive encryption, you'd want to keep the small --aux files on the host, not on the enclosure. The automation would need to keep one --aux file per enclosure, & for this narrow case, it would need to identify the enclosures to make sure it uses that enclosure's --aux file when generating the incremental archive.)

chkno 1 year ago • 100%

I use a small shell script

chkno 1 year ago • 100%

Whatever you want? / It probably doesn't matter. / If it's not causing pain why mess with it?

When using nix on non-nixOS, it may be tricky to get the OS to use the nix-managed packages for core system / early boot stuff like linux (the kernel), systemd, agetty, xdm, etc., but usually there's no need to. Nix packages bring all their needed dependencies, so even if nixpkgs is using a newer libc or something that's not compatible with the system libc, things will still mostly* Just Work because the nix-built packages know exactly where to look for the nix-managed libc they were built with.

(* Notable exception: OpenGL drivers, which for reasons are selected at runtime rather than build-time. See issue 31189 for some history.)

chkno 1 year ago • 100%

chkno 1 year ago • 100%

If you can get state boundary image data coincident with height map data (such as by taking two screenshots on the USGS website, one with the heightmap data opaque and one with it translucent, without panning or zooming between), you could use a normal image editor (eg: GIMP) to mask the height map data so that it's zero (black) outside the state boundary and at least slightly gray inside the state boundary. With OpenSCAD's surface, this would give you a rectangle that's flat outside the state and at some minimum height inside the state. You could then use one difference or intersection to cut across the model by height, trimming off the flat rectangular base.

(I.e., doing the trimming in the image seems much easier than trimming an STL, & would totally work.)
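
If you'd rather script the masking than do it in an image editor, the per-pixel rule is just this. A minimal Python sketch on plain nested lists; `mask_heightmap` is a hypothetical name, and loading the screenshots and deriving the inside/outside mask from the boundary image are assumed to have happened already.

```python
def mask_heightmap(heights, inside, floor=1):
    """Zero the height map outside the boundary; keep it >= floor inside.

    heights: rows of grayscale values (e.g. 0-255).
    inside:  rows of booleans with the same shape, True inside the state.
    The floor keeps even the lowest in-state pixels above the flat base,
    so a single cut by height can trim the base off afterwards.
    """
    masked = []
    for height_row, inside_row in zip(heights, inside):
        masked.append([
            max(h, floor) if keep else 0
            for h, keep in zip(height_row, inside_row)
        ])
    return masked
```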

chkno 1 year ago • 100%

Not as long as I'm careful to keep the blade flat against the bed. The corners would likely gouge if I applied force with only the corner touching the bed, so I don't do that.

I suppose the corners would gouge if the tool was treated roughly & the corners were to become bent such that they no longer lined up perfectly with the rest of the blade, so I don't do that either.

:)

chkno 1 year ago • 100%

As a bed scraper, I use a putty knife that I've sharpened on one side (chisel grind, #4).

Before printing too-sticky materials (like TPU on my PEI bed), I put down a layer of glue stick. This is sticky enough for successful prints, but easily removed at the end of the print.

chkno 1 year ago • 100%

Have the thing that uses obj take it as a normal constructor argument, and have a convenience wrapper that supplies it:

    from contextlib import contextmanager

    @contextmanager
    def context():
        x = ['hi']
        yield x
        x[0] = 'there'  # runs when the with-block exits normally

    class ObjUser:
        def __init__(self, obj):
            self.obj = obj

        def use_obj(self):
            print(self.obj)

    # Convenience wrapper: enters context() and hands its obj to ObjUser.
    @contextmanager
    def MakeObjUser():
        with context() as obj:
            yield ObjUser(obj)

    with MakeObjUser() as y:
        y.use_obj()

chkno 1 year ago • 100%

- Paper tokens: Produce 100 billion authentication tokens (could be passwords, could be private keys of signed certificates), print them on thick paper, fold them up, publicly stir them in giant vats at their central manufacturing location before distributing them to show that no record is being kept of where each token is being geographically routed to, and then have them freely available in giant buckets at any establishment that already does age-checks for any other reason (bars, grocery stores that sell alcohol or tobacco, etc.). The customer does the usual age-verification ritual, then reaches into the bucket and themselves randomly selects any reasonable number of paper tokens to take with them. It should be obvious to all parties that no record is being kept of which human took which token.

- Require these tokens to be used for something besides mature-content access. Maybe for filing your taxes, opening bank accounts, voting, or online alcohol / tobacco purchases. This way, people requesting these tokens do not divulge that they are mature-content consumers.

chkno 1 year ago • 100%

Check out Winston Moy's video on making a topographic map of Colorado. They mention these data sources:

- The one you already found

- The U.S. Geological Survey (USGS)

- Terrain2STL, which uses Consultative Group for International Agricultural Research - Consortium for Spatial Information (CGIAR-CSI) data

OpenSCAD's surface primitive can turn height maps into STL files.

You may have to trim the state boundaries yourself. :(

chkno 1 year ago • 100%

My stock Prusa i3 MK2s from 2017 is still in regular use & still works great. Not sure if this counts as 'old'.

chkno 1 year ago • 100%

If you haven't read through them already:

- Nixpkgs documentation on packaging javascript things

- Nixpkgs wiki pages for Node.js and Language-specific package helpers

- Search for relevant issues and PRs: github.com/NixOS/nixpkgs/issues?q=cockpit

It looks like cockpit has been packaged and moduled. Maybe look at cockpit's package and module definitions for inspiration for cockpit-machines? It looks like cockpit is a hybrid python+javascript project and has been packaged primarily as a python package, and cockpit-machines is also a hybrid python+javascript project but maybe the python aspect is less central, as I don't see a top-level pyproject.toml, so you may not be able to exactly mimic the cockpit package?

chkno 1 year ago • 100%

chkno 1 year ago • 100%

Any sane compiler will simplify this into

    function cosmicRayDetector() {
        while(true) {
        }
    }

C++ may further 'simplify' this into

    function cosmicRayDetector() {
        return
    }

chkno 1 year ago • 100%

I accidentally the timezone.

chkno 1 year ago • 80%

I got a Prusa (MK2s) six years ago and it's been really, really great. Unfortunately, your budget restriction rules out the current model.

If you can get access to a printer at a friend's house, a library, a school, a workplace, a makerspace, etc., I would suggest not buying a printer while totally new to 3D printing. Do some 3D printing for awhile. Then, on the basis of that experience, decide if you want to commit to having a good printer at a slightly higher price point.

400€ is really very restrictive. Commercial 3D printers are 10,000€ to 100,000€. Prusa's printers are extremely good for their modest ~900€ price.

If you do decide to get a Prusa, I recommend the kit over the assembled option. It's cheaper, and the familiarity you get with its components and construction during assembly gives you the power of fearless repair and tinkering -- you've already assembled it once, so disassembling it and reassembling it for repair or upgrade is no big deal.

chkno 1 year ago • 100%

I'm currently reading Thomas C. Schelling's The Strategy of Conflict. So far, it seems to be presenting a wide range of mechanisms as actual possible strategies, when I would have expected these mechanisms to be cataloged solely for establishing common knowledge of possible counterfactuals best avoided. I'm beginning to be concerned that I'll get more positive examples of how one ought to actually comport themselves in this domain from BDSM Decision Theory D&D Fanfic than from this historical, venerable, seminal work on the subject.

chkno 1 year ago • 100%

Parents of young children read children's books to children, and this is all well and good, but before this there is a special, magical time where parents of especially young children get to read whatever books they fancy to the child. Especially young children still love being read to, still get all the same benefits of learning phonemes and word splitting, and were never going to follow the story no matter how simple, so you just get to read whatever you wanted to read anyway & it doubles as child storytime.