vidarh

3 months ago

•

93%

The age matters less than the power-dynamics of her being his nanny.

vidarh

8 months ago

•

100%

I'm not sure if "optimized" is the right word for my stack at the moment. It's optimized in the sense that it is small, sure, but that comes at a performance cost: as much as I love Ruby, doing font rendering in Ruby, for example, is only viable because you only need to render each glyph once per size you use. But I feel the performance tradeoff is acceptable. For me, at least.

The terminal is also nothing "special" yet, other than the fact that it's written in Ruby and uses that Ruby font renderer. It needs some serious bug fixes and cleanups, and then that too will go on GitHub.

For me the tradeoff is that I get full control, and there are a few things I want to experiment with:

-

Since it can parse escape codes, nothing prevents adding a thin IO wrapper so the backend can output to an X11 window. The benefits would include being able to e.g. use part of a window for text output while rendering other things in the rest of the window, or plugging in your own code to augment the rendering in various ways.

-

But if you do that, you can strip out or bypass the escape code parsing and use the underlying terminal buffer for the same purpose. E.g. my text editor already renders to a terminal-like buffer, so when running under X it could skip the terminal pipeline, and I'd have the option of "upgrading" its rendering while keeping most of it pure text.

-

I'd like to play with ways to do filtering and post-processing of content. E.g. highlighting based on running Ruby code over the output.
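The first two ideas above can be sketched in a few lines: parsing escape codes into a buffer, versus writing to that buffer directly. This is a hypothetical, heavily simplified Python model, not the author's Ruby terminal.

- `TerminalBuffer` is a character grid with a cursor.
- `feed` handles only plain text, `\r`, `\n`, the CSI cursor-position sequence `ESC[row;colH` and erase-display `ESC[2J`. It ignores everything else.
- An application that embeds the buffer can skip `feed` entirely and call `move_to`/`put` directly.

```python
import re

class TerminalBuffer:
    """Fixed-size character grid with a cursor (simplified sketch)."""

    def __init__(self, rows: int, cols: int):
        self.rows, self.cols = rows, cols
        self.cells = [[" "] * cols for _ in range(rows)]
        self.row = self.col = 0

    def move_to(self, row: int, col: int):
        # 0-based coordinates, clamped to the grid.
        self.row = max(0, min(row, self.rows - 1))
        self.col = max(0, min(col, self.cols - 1))

    def erase_display(self):
        # Like ESC[2J: blank every cell but leave the cursor where it is.
        self.cells = [[" "] * self.cols for _ in range(self.rows)]

    def put(self, ch: str):
        if ch == "\n":
            # Treated as CR+LF for simplicity; no scrolling at the bottom row.
            self.row = min(self.row + 1, self.rows - 1)
            self.col = 0
        elif ch == "\r":
            self.col = 0
        elif self.col < self.cols:
            # Characters past the right edge are dropped (no line wrapping).
            self.cells[self.row][self.col] = ch
            self.col += 1

    def lines(self):
        return ["".join(r).rstrip() for r in self.cells]

# CSI sequence: ESC [ optional numeric params separated by ';' then a final letter.
CSI = re.compile(r"\x1b\[([0-9;]*)([A-Za-z])")

def feed(buf: TerminalBuffer, data: str):
    """Parse `data`, applying the few supported escape codes to `buf`."""
    pos = 0
    for m in CSI.finditer(data):
        for ch in data[pos:m.start()]:
            buf.put(ch)
        params = [int(p) if p else 0 for p in m.group(1).split(";")] if m.group(1) else []
        final = m.group(2)
        if final == "H":
            # ESC[row;colH is 1-based; missing or zero params default to 1.
            row = params[0] if len(params) > 0 and params[0] else 1
            col = params[1] if len(params) > 1 and params[1] else 1
            buf.move_to(row - 1, col - 1)
        elif final == "J" and params == [2]:
            buf.erase_display()
        # Any other CSI sequence is silently ignored in this sketch.
        pos = m.end()
    for ch in data[pos:]:
        buf.put(ch)
```

Writing through `move_to`/`put` produces the same grid as the escape-coded stream. That equivalence is what makes it possible to bypass the parser when an app runs under X.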

Especially since my editor makes up a large part of my use, it would be interesting to augment the backend with support for small "upgrades" with GUI features. E.g. letting Ruby apps that pull in the backend control and respond to a scrollbar in the terminal, "replace" the scrollback buffer with control over the editor's buffer, or add a minimap via plugins. That would make it really easy to write Ruby apps that work in any terminal but can get extra features when they can open their own windows.

vidarh

8 months ago

•

100%

At this point my window manager, terminal, file manager, and text editor, as well as a bunch of utilities like contextual popup menus for my file manager (similar to 9menu, fed by a script), are written in pure Ruby, including the X11 client bindings and the TrueType font renderer. I would really love to see Ruby get more use outside of Rails, as I have no interest in Rails, and Ruby has a lot to offer there. E.g. you might think it'd be too slow for a font renderer. While it's slow-ish, you only need to render each glyph once per size as you use them, so it works just fine, and the whole font renderer is currently only 588 lines...

Extend this across many of your main tools and you gain a system that is far easier to understand and modify to your own needs. E.g. my terminal is about 1800 lines of code. Xterm is about 88,000. Of course xterm does more, but mostly things I don't need. Trying to add the features I want to xterm would be a massive pain; adding them to 1800 lines of Ruby, on the other hand, is comparatively easy.

I'm slowly packaging up more of these tools. The big caveat is that I'm not really writing these "for users" but for my own use, and I have peculiar preferences (e.g. very minimalist). So these would not be pleasant for others to actually use, hence the over-the-top warnings :)

It's surprisingly easy to get an absolutely minimal wm working, though. E.g. this was my very first version (based on a C example called TinyWM): https://gist.github.com/vidarh/1cdbfcdf3cfd8d25a247243963e55a66

That is in fact all you need to do for a minimalist wm (that one is "just" floating and just a single desktop).

Past that, 99% of the pain is learning all the quirks of how X11 works, rather than the rest of the logic. E.g. after I restarted it last night, the grab of the Windows key + mouse button "broke" for some reason, without a single code change on my end. I'm clearly doing something wrong, but last time I ran into this it eventually "resolved itself", so it's hard to debug...

But to use this at this point you really need to actually enjoy chasing down those things. Hopefully it'll get closer to something usable for other people at some point down the line.

What the title says. It's <1k lines of Ruby and provides a basic tiling WM with some support for floating windows. It's minimalist, likely still buggy and definitely lacking in features, but some might find it interesting. It is actually the WM I use day to day.

It never ceases to amaze me how trivial it is to get temporary control over a phone number, *or* that, given how trivial it is, anyone trusts it for any kind of verification. So as hilarious as it is that the SEC didn't have 2FA set up, it's rather rich for X to claim it has nothing to do with them when they choose to trust a demonstrably unreliable method of proving ownership...

www.macrumors.com

vidarh

8 months ago

•

50%

Except if they were, it'd be well known. Startups at this kind of early stage typically have contracts that require approval for secondary sales, because increasing the number of people on the cap table enough triggers nearly the same reporting requirements as being public, which is a massive burden. It just doesn't work that way.

It's also hilarious that you think posting an article on Lemmy that is at best neutral, with a message of doom and gloom about risks to their business, is something OpenAI would have any interest in. If I wanted to pump OpenAI, there are better places to do it, and more positive spins to put on it.

vidarh

8 months ago

•

50%

Lol, what. OpenAI shares aren't available - there'd be no benefit to anyone trying to pump them.

vidarh

8 months ago

•

66%

You can't really trust anything a human says because we're frequently wrong yet convinced we're right, or not nearly as competent as we think, yet we manage, because in a whole lot of endeavours being right often enough and being able to verify answers is sufficient.

There are plenty of situations where they are "right enough" and/or where checking the output is trivial enough. E.g. for software development, where I can easily tell if the output is "right enough", and where humans are often wrong, and where we rely on tests to verify correctness anyway.

Having to cross-check results is a nuisance, but it's worth it. I can e.g. run things past it on subjects I know well enough to tell if the answers are bullshit, and it can often produce answers better than a lot of actual software developers. E.g. I recently had it give me a refresher on the algorithms to convert a non-deterministic finite automaton (NFA) to a deterministic finite automaton (DFA), and it explained them perfectly (which is not a surprise; there will be plenty of material on that subject). But unlike if I'd just looked it up on Google, I could also construct examples to test that I remembered it right and have it produce the expected output (which, yes, I verified was correct).
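For reference, the textbook NFA-to-DFA algorithm is the subset (powerset) construction. Each DFA state is the set of NFA states the automaton could currently be in, closed under epsilon moves. Below is a minimal Python sketch. The dictionary encoding of transitions (`(state, symbol) -> set of states`, with `None` meaning epsilon) and the example automaton are illustrative choices, not taken from the comment above.

```python
from collections import deque

EPS = None  # symbol used for epsilon transitions

def epsilon_closure(nfa, states):
    """All NFA states reachable from `states` via epsilon moves alone."""
    stack = list(states)
    closure = set(states)
    while stack:
        s = stack.pop()
        for t in nfa.get((s, EPS), ()):
            if t not in closure:
                closure.add(t)
                stack.append(t)
    return frozenset(closure)

def nfa_to_dfa(nfa, start, accepting, alphabet):
    """Subset construction: each DFA state is a frozenset of NFA states."""
    dfa_start = epsilon_closure(nfa, {start})
    table = {}
    seen = {dfa_start}
    queue = deque([dfa_start])
    while queue:
        current = queue.popleft()
        for sym in alphabet:
            moved = set()
            for s in current:
                moved.update(nfa.get((s, sym), ()))
            target = epsilon_closure(nfa, moved)
            table[(current, sym)] = target
            if target not in seen:
                seen.add(target)
                queue.append(target)
    # A DFA state accepts if it contains any accepting NFA state.
    dfa_accepting = {S for S in seen if S & accepting}
    return dfa_start, table, dfa_accepting

def accepts(dfa_start, table, dfa_accepting, word):
    state = dfa_start
    for sym in word:
        state = table[(state, sym)]
    return state in dfa_accepting

# Example: NFA over {a, b} accepting strings that end in "ab".
nfa = {(0, "a"): {0, 1}, (0, "b"): {0}, (1, "b"): {2}}
start, table, acc = nfa_to_dfa(nfa, 0, {2}, "ab")
print(accepts(start, table, acc, "aab"))  # True
print(accepts(start, table, acc, "aba"))  # False
```

The example NFA yields three DFA states: {0}, {0, 1} and {0, 2}, with only {0, 2} accepting. Constructing small cases like this and checking them by hand is the kind of self-test described above.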

I also regularly have it write full functions. I have a web application where it has written ca. 80% of the code without intervention from me. Plenty of my libraries now have functions it has written.

I use it regularly. It's saving me more than enough time to justify both the subscription to ChatGPT and API fees for other use.

As such, it is "actually useful" for me, and for many others.

www.androidcentral.com

vidarh

8 months ago

•

66%

Bubble in the sense that "many companies will fail" we can agree on. Companies like OpenAI will survive, lawsuits or not. Even if they were to fail due to the lawsuits, the algorithms are known, and e.g. Microsoft, which has a license to the tech, would just hire the team, start over, and let the corporate entity go bankrupt.

But all of the "ChatGPT for field X" companies that are just razor-thin layers on top of OpenAI's API, sure, they will almost all fail. The only ones that won't will be those that leverage their initial investment into an opportunity to quickly pivot into something more substantial.

A lot of people talk about AI as a bubble in the sense of believing the tech will go away, though, and that will never happen, because it's useful enough.

Regarding OpenAI's market cap, I don't agree. I think it'll increase far more, unless they massively misstep. Even though it's riding high on hype, they also have a big lead that is down not to their hype but to actually being significantly ahead of even competitors like Google. And given the high P/E ratios in tech, they don't need to be the backend behind all that many big deployments at big companies before they'll justify that valuation, even if those deployments only field really stupid-simple uses that don't need the capabilities of GPT.

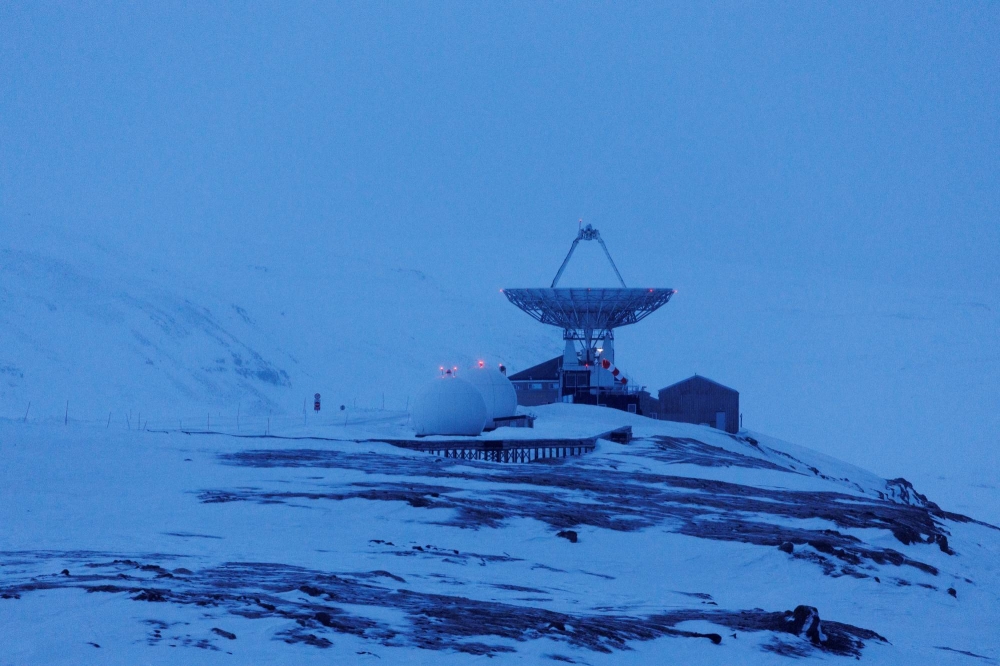

www.tomshardware.com

The world's fastest supercomputer blasts through one trillion parameter model with only 8 percent of its MI250X GPUs

vidarh

8 months ago

•

57%

Whenever I see them described as "plagiarism machines", odds are about 99% that the person using the term has no idea how these models work. Like humans, they can overfit, but most of what they output will have far less in common with any individual work than the levels of imitation people engage in all the time without being accused of plagiarism.

As for the environmental effects, it's a totally ridiculous claim. The GPUs used to train even the top-of-the-line ChatGPT models add up to a tiny rounding error of the power use of even middling online games, and training has only gotten more efficient since.

E.g. researchers at Oak Ridge National Laboratory published a paper in December after having trained a GPT-4 scale model with only 3k GPUs on the Frontier supercomputer using Megatron-DeepSpeed. 3k GPUs is about 8% of Frontier's capacity. While Frontier is currently the fastest, there are hundreds of publicly known supercomputers at that kind of scale, and many more that are not publicly known. Never mind the many millions of GPUs that are not part of any supercomputer.

vidarh

8 months ago

•

83%

You can't. The cat is out of the bag. The algorithms are well understood, and new papers on ways to improve output of far smaller models come out every day. It's just a question of time before training competitive models will be doable for companies in a whole range of jurisdictions entirely unlikely to care.

vidarh

8 months ago

•

50%

Why do you think anything will "burst"? If anything, if licensing requirements for content make training expensive, that's likely to make the biggest existing players far more valuable.

vidarh

8 months ago

•

100%

Possibly. On the other hand, OpenAI's market cap is bigger than the ten largest publishers combined - despite their whining they can afford to. It's not OpenAI that will be prevented from getting training data - the biggest impact will be that it might stop smaller competitors and prevent open-source models.

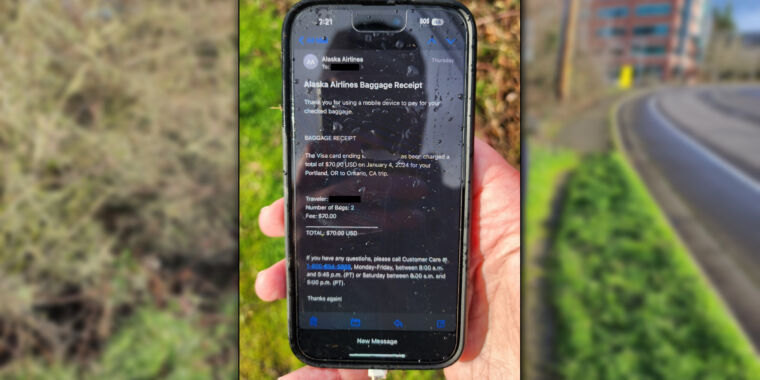

arstechnica.com

*I* would still manage to break it with ease one week after purchase (and so will stick with my cheap Android phones)

www.theguardian.com

www.theverge.com

"I can't let you drive there, Dave". As much as I love playing with ChatGPT, fuck this.

cross-posted from: https://lemmy.stad.social/post/44851 > Last time I saw these together she (left) chased him out of the garden at high speed. Apparently they are now best friends and looked annoyed when they noticed me staring at them.

vidarh

11 months ago

•

100%

Seems better behaved than many commuters I've run into.

vidarh

11 months ago

•

100%

> I feel like you are one of the people who feel that AI is just going to be the future with no real problems to anyone who matters. We can’t stop it, we can’t regulate it in any way whatever; and people should just move out of the way, give up and if they can’t find a place in the new world, die already. Artists don’t matter, writers don’t matter and anyone impacted by this new system doesn’t matter. The algorithm is all that matters.

If I thought that, I wouldn't have emphasised the need to sort out the funding issue, and argued that regulation alone will be insufficient to solve it.

I think it will cause a massive degree of upheaval. I don't think regulation has a hope in hell of preventing it. Unless a solution is found to ensure better distribution of wealth, the upheaval will be significant enough to cause violence, uprisings and governments to fall. Not necessarily in and of itself, but by accelerating a process of reducing the monetary value of labour.

> I can’t know anything about LLMs, machine learning or anything about this.

I've not suggested anything of the sort.

How you can interpret anything I've written as suggesting I don't think there will be problems is beyond me.

> You therefore throw out the idea that bias exists due to tagging systems.

I've done no such thing.

vidarh

11 months ago

•

100%

Quick iteration is definitely the big thing. (The eye is fun because it's so "badly designed": we're stuck in a local maximum that just happens to be "good enough" that we've never had to overcome the big glaring problems.)

And yes, if all the inputs are corrupted, the output will likely be too. But:

1) They won't all be. As long as there's a good mix, that will "teach" the network over time that the difference between a "corrupted cat" and an "uncorrupted cat" is irrelevant, because both will have most of the same labels associated with them.

2) These tools work by introducing corruption that humans aren't meant to notice, so if the output has the same kind of corruption, it doesn't matter. It only matters to the extent that the network "miscorrupts" the output in ways we do notice, and only once that becomes enough of a cost drag on training to be worth training out.

But you can largely improve on that with feedback: train a small network to recognize corruption, and then feed corrupted images back in as negative examples to teach it that those specific things are particularly bad.

Picking up and labelling small sample sets of types of corruption humans will notice is pretty much the worst case realistic effect these tools will end up having. But each such countermeasure will contribute to training sets that make further corruption progressively harder. Ultimately these tools are strictly limited because they can't introduce anything that makes the images uglier to humans, and so you "just" need to teach the models more about the limits of human vision, and in the long run that will benefit the models in any case.

vidarh

11 months ago

•

100%

> So what you are saying is open ai should get the public grants for artists to give to artists?

No. What in the world gave you that idea? I'm saying artists or companies employing artists should get grants, just like is the case for a large number of grants now. I'm saying I'd like to see more of that to compensate for the effects being liberal about copyright would have.

> I understand it isn’t trained for anything, I have done training with them. The training leads to homogeneous outcomes. It had been studied as well. You can look it up.

There is no "the training". There is a huge range of models trained with different intent, producing such a wide variety of output that some will produce output that others will just plain refuse to.

> Dall-e 3 still isn’t good enough to be competitive.

Dall-E 3 isn't anywhere near the leading edge of diffusion models. It's OpenAI playing catch-up. Now, neither Midjourney nor Firefly, nor any of the plethora of Stable Diffusion-derived models, is good enough today to be competitive with everyone without significant effort either. But that is also entirely irrelevant. Diffusion models are two years old, and the pace of progress has been staggering, to the point where we e.g. have already had plenty of book covers and the like using them. Part of the reason for that is that you can continue training a decent diffusion model even on a somewhat beefy home machine and get a model that fits your needs better, to an extent you can't yet do with LLMs.

> Asking and crediting would go a long way to help fix the financial challenge. Because it is a start to adding a financial component. If you have to credit someone there becomes an obligation to that person.

If there is a chance that crediting someone will lead to a financial obligation, people will very quickly do the math on how cheaply they can buy works for hire instead. And the vast bulk of this is a one-off cost. You don't need to keep adding images to teach the models things they already know, so the potential payout from creating some sort of obligation is limited. Any plan for fixing the financial challenge that hinges on copyright is a lost cause from the start: unless the payout is a pittance, it creates an inherent incentive for AI companies to buy themselves out of that obligation instead. It won't be expensive.

vidarh

11 months ago

•

100%

> I don’t see these grants or public funding ever covering a private company for one.

Companies are by far the largest recipients of public funding for art in many countries and sectors. Especially for e.g. movie production in smaller languages, but also in other sectors.

> And for two, I don’t see AI art ever actually getting to the point where it fully replaces artists.

I do agree it won't fully replace artists, but not because it won't get to the point where it can be better than everyone, but because a huge part of art is provenance. A "better Mona Lisa" isn't worth anything, while the original is priceless, not because a "better" one isn't possible, but because it's not painted by Da Vinci.

But that will only help an even narrower sliver than the artists who are making good money today.

It will take time, but AI will eat far more fields than art, and we haven't even started to see the fallout yet.

> Because it is trained to make a homogeneous rendering of what you are looking for

Diffusion models are not trained "for" anything other than denoising towards a match with the conditioning vectors, to within your own tolerance for how closely the result matches what you are looking for. Accordingly, you'll see a whole swathe of models tuned on more specific types of imagery, along with tooling to more precisely control what they generate. The "basic" web interfaces are just scratching the surface of what you can do with e.g. ControlNet and the like. It will take time before they get good enough, sure. But they are also only 2 years old, and people have only been working on tooling around them for much less than that.
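To make "trained to denoise" concrete, here is the widely used DDPM-style formulation (individual models vary in the details). Training adds Gaussian noise to an image $x_0$ according to a noise schedule $\bar\alpha_t$. The network $\epsilon_\theta$ then learns to predict that noise, given the noisy image, the timestep $t$ and a conditioning vector $c$ such as a text embedding:

$$
\begin{aligned}
x_t &= \sqrt{\bar\alpha_t}\,x_0 + \sqrt{1-\bar\alpha_t}\,\epsilon, \qquad \epsilon \sim \mathcal{N}(0, I),\\
\mathcal{L}(\theta) &= \mathbb{E}_{x_0,\,c,\,t,\,\epsilon}\left[\,\lVert \epsilon - \epsilon_\theta(x_t, t, c) \rVert^2\,\right].
\end{aligned}
$$

The objective itself says nothing about style; that comes from the data a model is trained or fine-tuned on.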

> Open AI might be sitting on Microsoft money, but how many other companies has Microsoft gobbled up over the years? Open AI if it starts to struggle will just fall under the Microsoft umbrella and become part of its massive conglomerate, integrated into it. Where are our AR goggles that we are supposed to all be wearing, Microsoft and Google both had those? So many projects grow and die with multiple millions thrown at them. All end up with crazy valuations based on future consumer usage. As we all can’t even afford rent.

OpenAI is just one of many in this space already. They are in the lead for LLMs, that is, text-based models, but even that lead is rapidly eroding. They don't have any obvious lead for diffusion models for images. Having used several, I'd say it was only with the recent release of DALL-E 3 that theirs got "good enough" to be competitive.

At the same time there are now open models getting close enough to be useful, so even if every AI startup in the world collapsed this won't go away.

> There is also this idea that people wouldn’t willing contribute if just asked.

That's fine, but that doesn't fix the financial challenge.

vidarh

11 months ago

•

100%

It doesn't need to "develop its own style". That's the point. The more of these adversarial images there are in the training set, the better it will learn to disregard the adversarial modifications while still learning the same style. As much as you might want to stop it from learning a given style, as long as the style can be seen, it can be copied, by humans and AIs alike.

vidarh

11 months ago

•

60%

As long as people aren't ready for it, then it doesn't solve the immediate problem that needs to be solved today.

vidarh

11 months ago

•

100%

> If you work on commission only online, or never went to art school those won’t cover you.

There's no reason it has to stay like that. And most people in that position are not making a living from art as it is; expanding public funding to cover a large proportion of working artists at a better level than today would cost a pittance.

> These large tech companies become so highly valued at the start because of venture capital and then in 5-10 years collapse under their own weight. How many of these have come up and are now close to drowning after pushing out all competitors? Sorry if I’m not excited about an infusion of cash into a large for profit company that is just gobbling up anything anyone posts online without consent to make a quick buck.

MS, Apple, Meta, Google etc. are massively profitable. OpenAI is not, but it is sitting on a huge hoard of Microsoft cash. It doesn't matter that many are close to drowning. There is enough cash floating around for the big tech companies to outright buy more than enough content if they have to. That means regulation to prevent them from gobbling up anything anyone posts online without consent will not stop them, so it isn't a solution. It will stop new entrants with little cash, but not the big ones. And even OpenAI can afford to buy up some of the largest content owners in the world.

The point was not to make you excited about that, but to illustrate that fighting a battle to restrict what they can train on is fighting a battle that the big AI companies won't care if they lose - they might even be better off if they lose, because if they lose, while they'll need to pay more money to buy content, they won't have competition from open models or new startups for a while.

So we need to find other solutions, because whether or not we regulate the use of copyrighted training data, these models will continue to improve. The cat is out of the bag, and the computational cost of improving these models keeps dropping. We're also just a few years away from people being able to train models competitive with present-day models on computers within reach of hobbyists. So even if we were to ban these models outright, artists will soon compete with output from them anyway, no matter the legality.

Focusing on the copyright issue is a distraction from focusing on ensuring there is funding for art. One presumes the survival of only one specific model that doesn't really work very well even today and which is set to fail irrespective of regulation, while the latter opens up the conversation to a much broader set of options and has at least a chance of providing working possibilities.

vidarh

11 months ago

•

71%

I live in the UK and I don't drink beer because I generally, ironically, think beer overall tastes like piss, and yet I still know Tsingtao. It has fairly substantial market recognition in a lot of countries.

vidarh

11 months ago

•

100%

It doesn't need to be full-on UBI. In a lot of countries, grant mechanisms and public purchasing mechanisms for art already make up a significant proportion of income for artists. This is very common especially in smaller countries: more so for literary works, movies and music, where language is a significant barrier to reaching a bigger audience, but for other art too. Imagine perhaps a tax or compulsory licensing mechanism that doesn't stop AI training but instead massively expands those funding sources for people whose data are included in training sets.

This is not stoppable, not least because it's "too cheap" to buy content outright.

I pointed out elsewhere that e.g. OpenAI could buy all of Getty Images for ~2% of their currently estimated market cap, based on a rumoured recent cash infusion. Financing vast amounts of works for hire just creates a moat against smaller players, while the big players will still be able to keep improving their models.

As such it will do nothing to protect established artists, so we need expansion of ways to fund artists whether or not inclusion of copyrighted works in training sets becomes restricted.

vidarh

11 months ago

•

100%

You wouldn't want to. If you just feed it to the models, then if there are enough of these images to matter the model will learn to ignore the differences. You very specifically don't want to prevent the model from learning to overcome these things, exactly because if you do you're stuck with workarounds like that forever, but if you don't the model will just become more robust to noisy data like this.

vidarh

11 months ago

•

100%

An AI model will "notice them" but ignore them if trained on enough copies with them to learn that they're not significant.

vidarh

11 months ago

•

100%

Yes: Train on more images processed by this.

In other words: If the tool becomes popular it will be self-defeating by producing a large corpus of images teaching future models to ignore the noise it introduces.

There are likely easier "quick fixes" while waiting for new models, but this is the general fix that will work against almost any adversarial attack like this.

There might be theoretical attacks that'd be somewhat more difficult to overcome, to the extent of requiring tweaks to the models. But humans demonstrably can translate text to images in a way that overcomes any such adversarial method that isn't noticeable to humans. So there will inherently always be a way to beat them.
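The "train on more images processed by this" fix is ordinary data augmentation. Perturbed copies of training images are added under their original labels, so the model learns that the perturbation carries no information. Below is a minimal Python sketch, assuming images are flat lists of pixel values in [0, 1]. `perturb` is a hypothetical stand-in that adds small random noise. A real cloaking tool optimises its perturbation against a model rather than sampling it at random, so this only illustrates the shape of the fix.

```python
import random

def perturb(image, strength, rng):
    """Hypothetical stand-in for an adversarial tool: add small bounded
    noise to each pixel, clamped to the valid [0, 1] range."""
    return [min(1.0, max(0.0, p + rng.uniform(-strength, strength))) for p in image]

def augment(dataset, strength=0.03, copies=1, seed=0):
    """Return the original (image, label) pairs followed by perturbed copies
    that keep the SAME label, so training treats the noise as irrelevant."""
    rng = random.Random(seed)  # seeded for reproducibility
    out = list(dataset)
    for image, label in dataset:
        for _ in range(copies):
            out.append((perturb(image, strength, rng), label))
    return out

dataset = [([0.5] * 4, "cat"), ([0.1] * 4, "dog")]
augmented = augment(dataset, copies=2)
print(len(augmented))  # 6
```

The labels are deliberately left untouched. Pairing the perturbed image with the unchanged label is what teaches the model to ignore the perturbation.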

vidarh

11 months ago

•

100%

That's hilarious. If these tools become remotely popular, their users will provide enough adversarial data for the training to overcome them all by itself. So there's little reason for anyone with access to A100s to bother trying: the tools will either be a minor nuisance used by a tiny number of people, or be self-defeating.

vidarh

11 months ago

•

75%

To me, that's not an argument for regulating AI, though, because most regulation we can come up with will benefit those with deep enough pockets to buy themselves out of the problem, while solving nothing.

E.g. as I've pointed out in other debates like this, Getty Images has a market cap of <$2bn. OpenAI may have had a valuation in the $90bn range. Google, MS and Adobe all have share prices that would trivially allow them to purchase someone like Getty to get ownership of a large training set of photos. Adobe already has rights to a huge selection via their own stock service.
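Taking those two figures at face value (rough numbers as stated here, not current valuations), the ratio is:

$$
\frac{\text{Getty market cap}}{\text{OpenAI valuation}} < \frac{2\ \text{bn USD}}{90\ \text{bn USD}} \approx 0.022 = 2.2\%
$$

Since Getty's market cap is stated as an upper bound, this is in line with the ~2% estimate.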

Bertelsmann owns Penguin Random House and a range of other publishing subsidiaries. Its market cap is around 15 billion euros, also well within reach for a large AI contender to buy so it can insert clauses about AI rights. (You think authors will refuse to accept that? All but the top sellers will generally be unable to afford to turn down a publishing deal, especially if it's sugar-coated enough. Publishers also sit on a shit-ton of works where the source text is out of copyright but they own the rights to the translations outright as works-for-hire.)

That's before considering simply hiring a bunch of writers and artists to produce data for hire.

So any regulation you put in place to limit the use of copyrighted works only creates a "tax" effectively.

E.g. OpenAI might not be able to copy artist X's images, but they'll be able to hire artist Y on the cheap to churn out art in artist X's style for hire, and then train on that. They might not be able to use author Z's work, but they can hire a bunch of hungry writers as a content farm. Published books sell ca. 200 copies on average, and the average full-time author in the UK earns below minimum wage from their writing.

The net result for most creators will be the same.

Ever wonder why Sam Altman of OpenAI has been lobbying about the dangers of AI? This is why. And it's just the start. As soon as these companies have enough capital to buy themselves access to data, regulations preventing training on copyrighted data will let them pull up the drawbridge. It will become cost-prohibitive for people to build open, publicly accessible models in ways that can be legally used.

And in doing so they'll effectively get to charge an "AI tax" on everyone else.

If we're going to protect artists, we'd be far better off finding other ways of compensating them for the effects, not least because it will actually provide them some protection.

vidarh

11 months ago

•

80%

> You can see the difference in the process in the results, for example in how some generated pictures will contain something like a signature in the corner

If you were to train human children on an endless series of pictures with signatures in the corner, do you seriously think they'd not emulate signatures in the corner?

If you think that, you haven't seen many children's drawings. Children also often pick up that it's normal to put something in the corner, even though pictures with signatures are a tiny proportion of children's visual input.

> Or how it is at least possible to get the model to output something extremely close to the training data

People also mimic. We often explicitly learn to mimic: e.g. I have my son's art folder right here, full of examples of him being explicitly taught to make direct copies as a means to learn technique.

We just don't have very good memory. This is an argument for a difference in ability to retain and reproduce inputs, not an argument for a difference in methods.

And again, this is a strawman. It doesn't even begin to try to answer the questions I asked, or the one raised by the person you first responded to.

> That at least proves that the process is quite different to the process of human learning.

Neither of those really suggests that at all. That diffusion differs from how humans learn to generalize images is likely true, but what you've described does not provide even the start of any evidence of it. And again, that is a strawman.

There was no claim they work the same. The question raised was how the way they're trained is different from how a human learns styles.

vidarh

11 months ago

•

100%

> This idea that copyright and IP shouldn’t exist at all is kinda absurd.

For the majority of human existence, that was the default.

Copyright exists as an explicit tradeoff. On one side are the rights of the public to do as they please with stuff introduced into the public sphere. On the other is a legal limitation on the public's liberty, for a limited time, to encourage the creation of more works for the public benefit. It was not introduced as some sort of inherent right, but as a trade between the public and creators to incentivise them.

Stripping it away from existing artists who have come to depend on it, without some alternative, would be grossly unfair, but there's nothing absurd about wanting to change the bargain over time. After all, that has been done many times, and the copyright we have now is vastly different from, and far more expansive and lengthy than, early copyright protection.

Personally, I'd be in favour of finding alternative means of supporting creators and stripping back copyright as a tradeoff. The vast majority of creators earn next to nothing from their works; only a very tiny minority make a livable wage from art of any form at all, and even for them the vast majority of profits come in a very short period of initial exploitation of a work. So we could allow the vast majority to earn more from their art relatively cheaply, and affect the rest to a relatively limited degree, while benefiting from the reduced restrictions.

vidarh

11 months ago

•

76%

> Society is built to distribute wealth, so that everyone can live a decent life.

As a goal, I admire it, but if you intend this as a description of how things are it'd be boundlessly naive.

vidarh

11 months ago

•

80%

> Human brains clearly work differently than AI, how is this even a question?

It's not all that clear that those differences are qualitatively meaningful, but that is irrelevant to the question they asked, so this is entirely a strawman.

Why does the way AI vs. the brain learns make training AI with art different from a person studying art styles? Both learn to generalise features that allow them to reproduce them. Both can do so without copying specific source material.

> The term “learning” in machine learning is mainly a metaphor.

How does the way they learn differ from how humans learn? They generalise. They form "world models" of how information relates. They extrapolate.

> Also, laws are written with a practical purpose in mind - they are not some universal, purely philosophical construct and never have been.

This is the only uncontroversial part of your answer. The main reason courts will treat human and AI actions differently is simply that AIs are not human. For the foreseeable future, it will have little to do with whether the processes are similar enough to how humans do it.

vidarh

11 months ago

•

94%

They don't even need to detect them - once they are common enough in training datasets, the training process will "just" learn that the noise they introduce is not a feature relevant to the desired output. If there are enough images like that, it might eventually generate images with the same features.

vidarh

11 months ago

•

91%

Trying to detect poisoned images is the wrong approach. Include them in the training set and the training process itself will eventually correct for it.

> I think if you build more robust features

Diffusion approaches etc. do not involve any conscious "building" of features in the first place. The features are learned by training the net to match images with text features correctly, and then to "just" repeatedly predict how to denoise an image to get closer to a match with the text features. If the input includes poisoned images, so what? It's no different than e.g. compression artifacts, or noise.

These tools all try to counter models trained without any images processed by them in the training set, with at most fine-tuning, but all they show is that models that haven't seen many images processed by that particular tool will struggle.

But in reality, the massive problem with this is that we'd expect any such tool that becomes widespread to be self-defeating: the images it produces will work their way into the models at a sufficient volume that the models will learn them. In doing so, they will make the models more robust against noise and artifacts, and so make the job harder for the next generation of these tools.

In other words, these tools basically act like a manual adversarial training source, and in the long run the main benefit coming out of them will be that they'll prod and probe at failure modes of the models and help remove them.

vidarh

11 months ago

•

100%

Largely, I expect, because of the point you make. Needing an actual army of people to control them becomes a limiting factor. Add to that the fact that requiring remote control makes them vulnerable to jamming, and there's a strong incentive to make them more and more autonomous, both to enable fewer soldiers to control more bots and to allow them to retain some function without a remote-control link.

It just largely seems like it will be too significant a temptation.

www.foxnews.com

Phrenology: The discredited, useless, racist pseudo-science, now in your dating app!

globalnews.ca

Unsurprising. And I suspect the results wouldn't be very different elsewhere. And that's why we're fucked.

www.alternet.org

"All hope lost"? I for one have plenty of hope that this will lead to further hilarity as his vote keeps dropping until they get tired of the circus...

vidarh

11 months ago

•

100%

I'm just very tickled at how much it backfired - Lewis turned outright anti-Catholic. If I'd been a religious man I might have tried to read something into that (but I'm not, so).

vidarh

11 months ago

•

50%

Yes, she is free to be a giant asshole with a persecution complex. And we are free to call her one.

vidarh

11 months ago

•

100%

The funny thing is we can blame Tolkien for that. It was Tolkien who got Lewis to convert, though Lewis became a Protestant while Tolkien was a Catholic, and hilariously Tolkien found Lewis' use of Christian symbolism overdone and lacking in subtlety.

vidarh

11 months ago

•

75%

I've never read the books, but I did enjoy the movies, and it's really disappointing. I have the DVDs, so I guess I could still watch those knowing it won't signal any continued demand the way streaming them would, but still.

www.thedrive.com

Because what could *possibly* go wrong.

www.euronews.com

cross-posted from: https://lemmy.world/post/7027429

> ‘Groundbreaking’ bionic arm that fuses with user’s skeleton and nerves could advance amputee care
>
> The bionic arm has been working for years, reducing the user’s level of pain. The first person to receive it tells how life changing it has been.

www.theguardian.com

www.nbcnews.com

Not the big fish, but every bit helps.

thehill.com

We are truly in the end-times. It's bad enough these two are up against each other, but that Trump 1) isn't behind bars and 2) is being taken seriously by anyone with an IQ above that of a turnip, *irrespective* of who he is running against, makes me lose all faith in humanity. Or in Americans, at least.

www.japantimes.co.jp

www.theregister.com

Who knew they even *had* that many...

www.technologyreview.com

www.businessinsider.com

www.lbc.co.uk

She's on a roll....

theconversation.com

Street art near Penge, South London

www.theverge.com

I don't mind the idea of charging for a *quality* service the least bit. But that requires offering a quality service *first*.

theconversation.com

A very long but fascinating overview of different attempts at autonomous, self-driving, and/or demand-responsive, app-integrated public transport options. (ok, so you're probably a bit weird - like me - if you're fascinated by this)

www.bbc.co.uk

vidarh

11 months ago

•

100%

Pica is eating things that are not food, but as pointed out in the article I linked, eating dog poo is providing a significant source of nutrition for foxes. In those circumstances, it by definition is not pica.

vidarh

11 months ago

•

100%

Pet dogs also eat poo on occasion, also without any underlying problem, so I really don't think there's any reason to think that far less domesticated species where it is well established would just stop. I'm sure you can reduce it, especially if it has a nicer food source, but still, an animal with far less history of domestication seems like a recipe for amplification of all the potential issues you don't want to deal with.

vidarh

11 months ago

•

100%

Yeah, population density makes a big difference. I grew up in Norway, and don't think I ever saw a fox in the wild in 25 years, but in London it's a regular occurrence. Especially in the suburbs, but I've even seen one in the dead centre of town once.

cross-posted from: https://lemmy.stad.social/post/23403

Yes, this is one of the full pictures from when the one used for the community avatar was taken. This is a few years back, and not the same fox as the one in the gazebo. I'll post another one from the same set in the comments.

www.bbc.co.uk

Either Attenborough's Life on Earth or The Living Planet - not quite sure which one, though I suspect Life on Earth - was the first TV series that got my parents to deviate from the regular bedtime when I was a child in the 1980s...

www.washingtoninstitute.org

One for the "but they voted for Hamas" apologists for human rights abuses.

> In fact, Gazan frustration with Hamas governance is clear; most Gazans expressed a preference for PA administration and security officials over Hamas—the majority of Gazans (70%) supported a proposal of the PA sending “officials and security officers to Gaza to take over the administration there, with Hamas giving up separate armed units,” including 47% who strongly agreed. Nor is this a new view—this proposal has had majority support in Gaza since first polled by The Washington Institute in 2014.